Content production has always been shaped by the tools available to creators. Typewriters gave way to word processors, analog recorders evolved into digital workstations, and photochemical film was replaced by sensors and software. Each technological shift changes not only how creators work but also what they imagine as possible. In recent years, voice synthesis has emerged as one of those expanding frontiers, enabling new forms of expression and experimentation. As professionals explore generative audio, services like ElevenLabs AI Voices often come up in conversation not as gimmicks but as ways to prototype ideas, dramatize narratives, and rethink the role of vocal performance in digital media.

This shift does not suggest that voice AI will replace human creativity. Rather, it highlights how emerging technologies expand the palette available to content makers. In fields ranging from podcasting to animation, synthetic voices provide a way to iterate quickly, explore conceptual variations, or imagine alternative ways of delivering information without the logistical overhead of traditional recording sessions. In this context, voice AI becomes a medium to be engaged with thoughtfully rather than merely adopted.

Creative iteration and voice experimentation

One of the most immediate impacts of voice AI is how it affects experimental workflows. In traditional audio production, capturing voice involves scheduling talent, booking time in a studio, and managing sound quality through controlled environments. These steps, while essential in many professional contexts, can slow down early creative phases when the goal is exploration rather than final production.

AI voice tools reduce some of these barriers by allowing creators to experiment rapidly with different vocal styles, tones, and performance cues. Instead of recording and re-recording with human actors, producers can test narrative rhythms and character voices in seconds. This capacity accelerates iteration, enabling teams to quickly evaluate whether a piece feels more serious, whimsical, authoritative, or conversational simply by switching the voice profile.

This doesn’t diminish the value of human voice work, but it does reorganize when and how that human input enters the process. Early drafts can be sketched in sound without waiting for scheduling availability, which can make room for more substantive creative decisions later in the workflow.

Accessibility and narrative reach

Voice has always been a core dimension of storytelling. It conveys emotion, pacing, and nuance in ways that text alone cannot. AI-generated voices broaden access to these expressive dimensions for creators who may not have the resources to engage professional narrators or voice actors. Independent podcasters, educators, and multimedia artists can explore vocalized content without the barriers of cost and logistics that traditionally make audio production prohibitive for small teams.

At the same time, synthetic voices can be an inclusive tool. For content that aims to reach diverse audiences, being able to adjust accent, timbre, and delivery style can help make material feel more relatable to different listener groups. This is especially relevant in educational content, where how something is said can influence comprehension and engagement as much as the underlying message.

In this broader landscape, voice AI isn’t a substitute for natural speech but an extension of expressive possibility: a way to tailor tone and pacing to context rather than settling for a one-size-fits-all default.

Ethical questions and creative responsibility

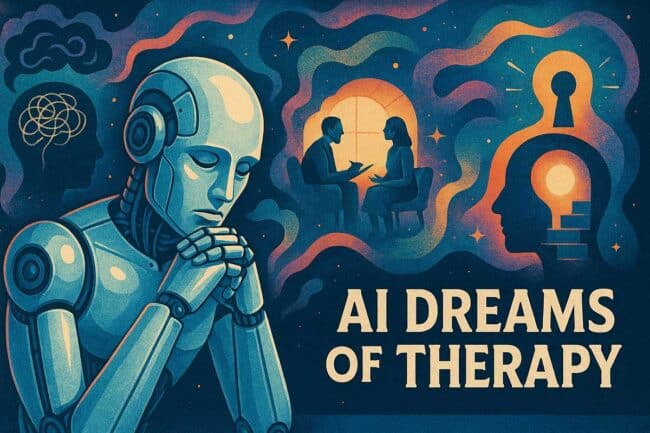

The emergence of voice AI also raises important ethical questions. As tools become more capable of mimicking human inflection and style, creators must confront matters of consent, representation, and authenticity. These concerns are not hypothetical; they intersect with ongoing debates about deepfakes, impersonation, and the broader implications of synthetic media.

Thinking through these questions requires nuance. On the one hand, voice AI can democratize creation. On the other hand, it can blur the line between original performance and generated output. Responsible usage involves being transparent about how voice AI is deployed in a given piece of content and understanding the expectations of audiences who may assume they are hearing a human performer.

These conversations are developing alongside the technology itself, and they shape norms that will affect how voice synthesis is integrated into professional and artistic ecosystems.

Collaboration rather than replacement

One of the most useful ways to frame the rise of voice AI is in terms of collaboration rather than competition with human creators. Tools do not have agency on their own; they reflect the choices of the people using them. When producers approach voice synthesis as an aid to exploration (a way to sketch ideas, test concepts, or imagine alternatives), the technology becomes an asset rather than a threat.

Human voice work remains integral to projects that require emotional depth, cultural nuance, and stylistic subtlety. AI can assist in getting to those moments faster, but it seldom replaces the craft and intentionality that define compelling audio content. In many workflows, the two elements are complementary, with AI serving as a starting point and human performers refining what comes next.

Impact on professional practice

As voice AI tools mature, their influence on content production practices continues to evolve. Some teams adopt synthetic voices for prototyping, others for draft narration, and still others for accessibility testing. In all these scenarios, the technology’s role is shaped by the creative goals at hand rather than by an assumed dominance of automation.

Professional practice adapts by incorporating these capabilities where they provide value and deferring to traditional methods where they do not. This selective integration reinforces the idea that voice AI is not a universal solution, but a conditional resource, useful in certain contexts and inappropriate in others.

Looking ahead

Voice AI represents a new layer in the evolving toolkit of modern content production. It encourages creators to think about voice not just as a recorded asset, but as a flexible parameter that can be shaped early in development. This reframing changes how narratives are constructed and how prototypes are evaluated, opening avenues for experimentation that were previously locked behind logistical overhead.

As content ecosystems grow richer and more diverse, the creative role of voice AI will likely continue to expand, not as a replacement for human performance, but as a medium for creative iteration and exploration. The value lies not in the novelty of synthetic sound but in the way it invites creators to redefine what voice can do in the digital age.